Hiring a Software Tester, an Analysis

In May of 2020, back when Promenade Group was still called BloomNation, I opened a job posting for a Software Test Engineer. This was to be the first of many positions we were hiring for. After going through the whole process of hiring a software tester, I thought it would be useful to analyze the applicant data to learn something about how I hire and about the people who applied.

About the data

Some of this data was collected through our recruiting system and some I entered manually. I spent a good deal of time crunching through raw data in Excel, then coming up with new questions and going back to find more data. Some of the data wasn’t captured at all, so I made guesses and assumptions, specifically for the applicants’ location and gender. I don’t hire based on gender, but I was curious to see how it might have affected the final outcome. Despite having 142 submissions, I ended up pulling data on only 107 resumes.

Background on the job and description

At the time COVID was just spreading in the US. Our engineering and product teams were working from home and I was looking for someone who was local and able to commute into our office in Santa Monica. That was then.

The job description was written by me, not a recruiter. It’s direct and honest about what I’m looking for in a software tester:

Software Test Engineers should have a good understanding of exploratory testing and how it is applied to all kinds of different interfaces. You should be comfortable in testing new features, the designs behind those features, the code and the software itself using a risk-based mind set for structuring your testing.

You understand the impossibility of complete testing better than most and are constantly striving to gather relevant information about risks. Maybe you’ve heard of the modern testing principles and you’d rather work in an environment focused on flexibility and agility rather than a traditional test management environment.

You’ll be expected to create test artifacts as appropriate, build a test strategy, use the appropriate test techniques, explore all relevant interfaces of the application, design and execute tests, do some regression testing and identify, investigate and report bugs.

Findings

Now for the main attraction, the data!

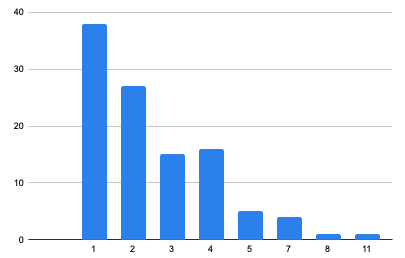

1. Many inexperienced applicants

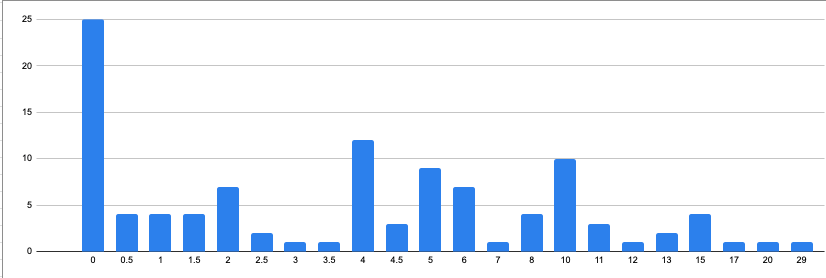

I was hiring for a mid-level position with at least 3 years of experience. Somehow I managed to leave the minimum number of years of experience (yikes!) out of the job description. This is what the distribution ended up as:

Nearly 43% of applicants were below the 3 year minimum I was hiring for. This was a lot of noise to weed through. I suspect that had I included the 3+ years minimum, far fewer (but not all) unqualified people would have applied.

When it came time to interview potential candidates, I selected people with a wide range of experience, from 3 to 29 years. In the end the person I hired had 4 years of experience.

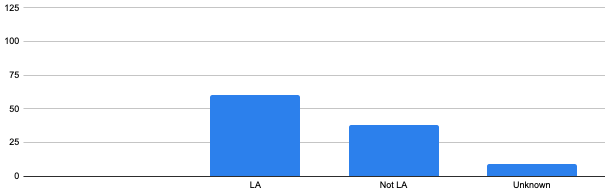

2. Location Mattered

The goal back in May was to hire someone who, once this whole pandemic blew over, would come to work with us in our Santa Monica office. This information was in the job description and on the application.

Yet many people didn’t pay attention to the fact that the job would eventually require working in our office:

More than 40% weren’t located in the greater Los Angeles area. This isn’t to say they wouldn’t have moved, but that wasn’t my expectation. In the end, the person I hired (go figure) was in the greater LA area. I actually got to meet her briefly in person on her first day when she picked up her equipment in the office.

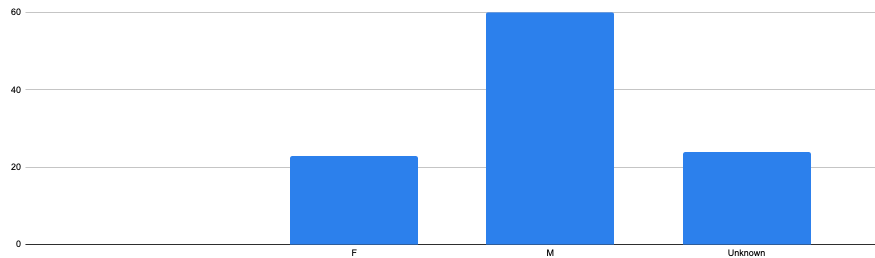

3. Gender discrepancies?

As I said above, I didn’t interview based on gender. I had no baseline data to compare this against (I know tech is skewed towards males, but I have no idea by how much). However, I was hoping to see some pattern in how appealing the job description was in terms of raw applications.

This data was tough to gather because I made a lot of assumptions based on names and people I interviewed. In the end I was able to determine gender for only about 25% of applicants.

Of the candidates I interviewed, 10 were women and 18 were men. The candidate I ended up hiring was female, but at least 60% of applicants (based on my guesstimates) appear to be male.

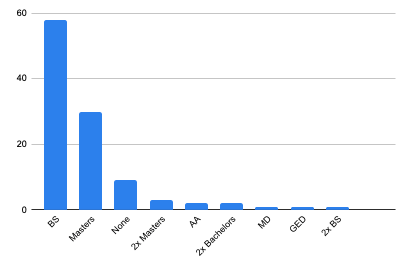

4. Not a lot of testing education

There was no education requirement in the job description. I’m more interested in the person being a capable tester than in their having gone to a specific school. However, almost everyone includes some educational background on their resume, so it was easy to gather.

There are some interesting numbers here:

- 54% of applicants had a Bachelor’s degree.

- 8.5% had no degree of any kind.

- At least one Medical Doctor applied (but I didn’t interview them).

Of those I interviewed, the educational range ran from no degree to a double Master’s. The candidate I hired had a Bachelor’s.

As a teacher myself, I looked at how many applicants had any kind of testing education on their resume. Simply saying you took a testing course or went to a conference counted:

- About 16% did

- The person I hired had some testing education

- About 21.4% of applicants I interviewed had some kind of testing education which was higher than the overall population.
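
As a rough sanity check on these percentages, here is a small sketch that turns them back into approximate head counts. It rests on two assumptions that the post does not state outright: that the overall denominator is the 107 resumes with data, and that the 10 women and 18 men counted in the gender section make up everyone interviewed. The helper name `implied_count` is made up for illustration.

```python
def implied_count(percent: float, total: int) -> int:
    """Round a percentage of a total to the nearest whole person."""
    return round(percent / 100 * total)

RESUMES_WITH_DATA = 107  # from "About the data"
INTERVIEWED = 10 + 18    # assumption: women + men interviewed covers all interviewees

# Approximate number of people with some testing education in each group
overall_count = implied_count(16, RESUMES_WITH_DATA)
interviewed_count = implied_count(21.4, INTERVIEWED)

# How much more common testing education was among interviewees
lift = 21.4 / 16

print(overall_count, interviewed_count, round(lift, 2))
```

Under those assumptions, the gap comes down to a handful of people, so it is better read as a hint than as a strong signal.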

5. Don’t make me read long Resumes

The number of pages in a resume is a big deal for a hiring manager going through 100+ resumes. Anything over a certain number of pages takes too long to read and understand.

Besides, your resume should tell the story of you. Sometimes those stories are too long or not written well enough, but they all say something about the candidate.

Early on in the hiring process I read resumes and scheduled interviews with candidates who had resumes longer than 3 pages. However, as time went on I seemed to interview only applicants whose resumes were 3 pages or fewer. The person I hired had a 2 page resume. I didn’t interview anyone with a resume over 5 pages. 25% of the resumes I received were longer than 3 pages.

Resume Trends

One of the most intriguing reasons to spend all this time going through resume data was to (hopefully) identify commonly used words: trends in the words we use to describe the kinds of work we do, such as test techniques, buzzwords and even documentation like Test Cases.

It gets a bit fuzzy here because I didn’t know what kinds of things would show up, nor how to truly classify these things. Next time I do this, I’ll look for certain buzzwords and not cast such a wide net.
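
Counting a fixed list of terms could be done with a few lines of Python. This is only a minimal sketch, not how the numbers in this post were produced (those came from Excel and manual review). The function name `term_share`, the sample resume snippets and the term list are all made up for illustration.

```python
import re

def term_share(resumes: list[str], terms: list[str]) -> dict[str, float]:
    """Return the percentage of resumes that mention each term at least once."""
    shares = {}
    for term in terms:
        # Word boundaries stop "agile" from matching inside "fragile"; case is ignored
        pattern = re.compile(r"\b" + re.escape(term) + r"\b", re.IGNORECASE)
        hits = sum(1 for text in resumes if pattern.search(text))
        shares[term] = round(100 * hits / len(resumes), 1) if resumes else 0.0
    return shares

# Made-up resume snippets, only for illustration
sample = [
    "Wrote test cases and ran regression testing in an Agile team.",
    "Performance testing and API testing; familiar with the SDLC.",
    "Regression testing, test plans, fragile legacy systems.",
    "Exploratory and risk based testing of mobile apps.",
]
terms = ["regression testing", "test cases", "agile", "SDLC"]

print(term_share(sample, terms))
```

Each resume counts once per term no matter how often the term appears, which matches the "% of resumes" framing used in the lists that follow. Spelling variants such as "risk based" versus "risk-based" would need their own entries in the term list.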

Most common test techniques

This was a fun one. The most commonly listed test techniques were:

- 82% of resumes: Performance Testing

- 42% of resumes: Regression Testing

- 36% of resumes: Functional Testing

- 23% of resumes: Automated Testing (although not a technique)

- 21% of resumes: API Testing

My money was on regression testing taking the top spot, so the upset by performance testing was a surprise. I’m also surprised the percentages for functional and regression testing aren’t much higher.

Fun fact: the job description mentioned taking a risk-based approach to testing. Risk-based testing was mentioned in 2 resumes, and I interviewed both candidates.

Most common documentation

- 36% of resumes: Test Cases

- 30% of resumes: Test Plans

- 15% of resumes: Test Scripts

Similar to my assumptions about common techniques, I’m a bit surprised at how few times these words showed up in resumes. While I personally don’t use the term test cases, my guess was that most people do. Yet here we are, and so few mention it. Perhaps that is due in part to the large number of people who applied with little or no experience?

On one hand I’d like to believe candidates are able to tell such an effective story in their resume without these words. On the other hand, that hasn’t been my anecdotal experience with phone interviews.

Most common buzzwords

Lots of words and phrases end up on resumes. For lack of a better word I’m calling them buzzwords, and I wanted to know which other terms I saw frequently:

- 30% of resumes: SDLC

- 30% of resumes: Agile

- 15% of resumes: Blackbox

- 13% of resumes: STLC

I have yet to see a resume where the use of the terms Software Development Lifecycle (SDLC) or Software Testing Lifecycle (STLC) added value. That’s not to say someone couldn’t have a compelling story using them; I’ve just never seen it. A resume should tell a story about what the person has done and maybe what they hope to do. Given limited time and space, it’s important to be choosy with words.

Closing Thoughts

It turns out it was useful to analyze this interview data for patterns and surprises. Some of the data backed up conclusions I had already reached, such as:

- I wasn’t reading long resumes

- I was favoring candidates in the Los Angeles area

Some of the data taught me something new, such as:

- I was favoring candidates who showed evidence of testing education

- 43% of my applicants were below my minimum experience threshold

Much of the Resume Trends data was fascinating and gives me a basis for collecting this data the next time I hire. Between now and when I started gathering this data (about 7 months ago), I hired again. While that data waits to be crunched, I fixed the minimum threshold problem and saw a reduction in the number of inexperienced candidates applying.
