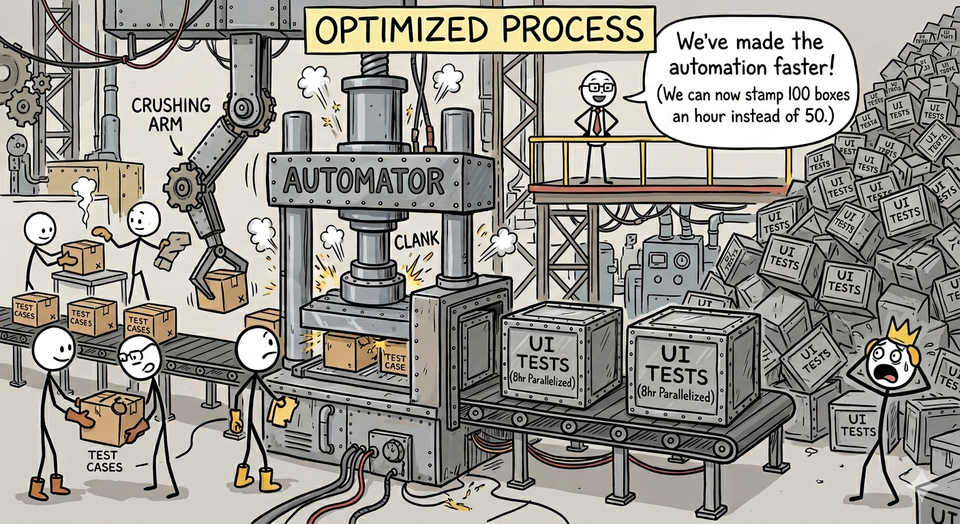

Optimizing the wrong part of the testing process

Over the years, as an interviewee, as an interviewer, and in casual conversation, I’ve talked to many teams that describe their test automation process like this:

Testers write test cases. SDETs or Test Automation Engineers pick up those test cases and turn them into automated tests.

The company I just joined does this. They’ve done it for years. Now they have 2,500 automated tests, written in Cypress, almost all running through the UI. It takes a little over 45 hours to run all the tests sequentially and over 8 hours when parallelized.

They also built the process so all of their testers (who don’t write test code) continue to add test cases to the automation team’s backlog. Now they have over 2,500 automated tests running and another 3,000 planned!
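To put those numbers in perspective, here is a rough back-of-the-envelope sketch. It assumes the quoted figures are exact (they are really lower bounds), that the 3,000 planned tests would cost about the same on average as the existing ones, and that parallelization would keep scaling the way it does today. None of those assumptions is guaranteed.

```typescript
// Figures quoted above, treated as exact for the sake of the estimate.
const automatedTests = 2500;
const plannedTests = 3000;
const sequentialHours = 45;
const parallelHours = 8;

// Average wall-clock cost of a single test when run back to back.
const secondsPerTest = (sequentialHours * 3600) / automatedTests;

// How much faster the current parallel setup is than a sequential run.
const effectiveSpeedup = sequentialHours / parallelHours;

// Assumption: planned tests have the same average cost and parallelism scales the same.
const projectedTests = automatedTests + plannedTests;
const projectedSequentialHours = (projectedTests * secondsPerTest) / 3600;
const projectedParallelHours = projectedSequentialHours / effectiveSpeedup;

console.log(`avg seconds per test: ${secondsPerTest.toFixed(1)}`);
console.log(`effective speedup: ${effectiveSpeedup.toFixed(1)}x`);
console.log(`projected sequential hours: ${projectedSequentialHours.toFixed(1)}`);
console.log(`projected parallel hours: ${projectedParallelHours.toFixed(1)}`);
```

Under those assumptions, each test averages about 65 seconds, and working through the planned backlog would push the suite to roughly 99 hours sequentially, or close to 18 hours parallelized.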

Optimized for What?

Just because something is optimized doesn’t mean it’s headed in the direction we want to go. In my newly inherited position, the testing processes are optimized to produce a lot of test artifacts, called test cases. They’re also optimized to produce one kind of test case: scenarios. (Scenarios are just one way to design a test, not the best or the only way.) Testers create a scenario test, run it, and assume it is a good candidate for automation.

The automation team usually agrees. They’ve designed most of the Cypress automated tests as scenarios. A small number are API tests. An even smaller number are engineered to seed data and use APIs to speed things along. They’ve done the best with what they have and it’s not all bad.

Optimization Problems

However, optimizing for output (test cases) rather than for outcomes (reduced product risk) is a problem. We want automated tests that deliver timely and actionable feedback to our teams. You can’t do that with an 8-hour runtime, let alone 45 hours.

The idea that all test cases should be automated is a fallacy. You’ve got to know how to build good systems to detect problems, not just record manual steps.

Good Foundation

I’ve got 99 problems and intent ain’t one - Definitely not Jay-Z

Many of these inherited processes have problems and are focused on the wrong thing. The good news is the team cares and works hard. Their intent is to do good work.

Now one of my many tasks is to take these unsustainable practices (imagine that suite doubling to a 90-hour runtime) and prune them. There are several things that need to happen:

- Break the mandatory link between creating test cases and adding them to the automation backlog:

  - There needs to be some ingestion practice so we can add valuable things to the backlog, but it shouldn’t be automatic.

- Build an automation strategy that explicitly makes adding new tests below the UI a consideration and priority.

  - I might even consider making it a requirement that you have to justify adding automated tests at the UI.

- Prioritize the backlog based on our automation strategy and document what good and bad examples of automated tests are.

- Educate my testers to look at product risk first and regression second, rather than simply focusing on test artifacts.

- Rebuild the optimization process around risk detection and mitigation.
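To make the intake idea above concrete, here is a minimal sketch of what a backlog gate could look like. Everything in it is hypothetical: the `Candidate` shape, the 1-to-5 `productRisk` scale, the threshold of 3, and the function names are illustrations for this post, not an existing tool or the team's actual process.

```typescript
// Hypothetical shape of a test case proposed for automation.
type Level = "api" | "ui";

interface Candidate {
  title: string;
  level: Level;
  productRisk: number; // assumed scale: 1 (low) to 5 (high)
  uiJustification?: string; // required only for UI-level candidates
}

interface Decision {
  accepted: boolean;
  reason: string;
}

// Nothing enters the backlog automatically: each candidate has to pass review.
function reviewCandidate(c: Candidate, minRisk = 3): Decision {
  if (c.productRisk < minRisk) {
    return { accepted: false, reason: "product risk below threshold" };
  }
  const justification = (c.uiJustification ?? "").trim();
  if (c.level === "ui" && justification === "") {
    return { accepted: false, reason: "UI-level test needs a justification" };
  }
  return { accepted: true, reason: "meets intake criteria" };
}

// Order accepted candidates by risk first, preferring below-the-UI tests on ties.
const levelOrder: Record<Level, number> = { api: 0, ui: 1 };

function prioritize(candidates: Candidate[]): Candidate[] {
  return candidates
    .filter((c) => reviewCandidate(c).accepted)
    .sort(
      (a, b) =>
        b.productRisk - a.productRisk ||
        levelOrder[a.level] - levelOrder[b.level]
    );
}

// Example candidates (made up for illustration).
const backlog = prioritize([
  { title: "Checkout total via pricing API", level: "api", productRisk: 5 },
  { title: "Footer link colors", level: "ui", productRisk: 1 },
  {
    title: "Login form keyboard navigation",
    level: "ui",
    productRisk: 4,
    uiJustification: "Behavior only exists in the browser",
  },
  { title: "Profile page happy path", level: "ui", productRisk: 4 },
]);

console.log(backlog.map((c) => c.title));
```

In this example only the pricing API check and the justified keyboard navigation test make it into the backlog. The low-risk footer check and the unjustified UI scenario are rejected, which is the point: the default answer for a new UI test becomes "explain why" rather than "add it to the pile".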

This is going to be a very fun and very long rebuild.
